Components, Features & Abstractions

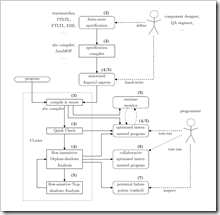

The figure on the right (click to enlarge) gives an overview of the Clara framework and the software development process that surrounds it. Clara’s workflow starts on the top right of the image. A developer first provides Clara with a set of annotated aspects that implement runtime monitors for typestate properties.

Runtime monitors

The runtime monitors have to be written in AspectJ. Software developer can obtain such AspectJ aspects either by hand-writing the aspects directly or, more commonly, by defining a set of finite-state specifications which are then translated into AspectJ aspects using a specification compiler. Common specification compilers that generate AspectJ and are compatible with Clara are tracematches and JavaMOP, but others are possible too.

Aspect annotations

Just providing plain AspectJ aspects is not quite enough, however. So that it can conduct its static analyses, Clara needs to know the finite-state property that the runtime monitor checks at runtime. While Clara could, in principle, analyze the provided aspect to determine the monitored property from there, AspectJ aspects are generally Turing complete and can be arbitrarily complicated. Clara’s major design goal was to de-couple the hard task of generating efficient runtime monitors form the second hard task of performing static typestate analysis. Hence, the monitoring code should be allowed to be arbitrarily complex and specialized.

Clara hence supports an annotation language that preserves additional information about the finite-state property at hand in a simple human-readable and machine-readable syntax called Dependency State Machines. Users can add these annotations manually to the hand-written or generated AspectJ aspects. However, we have implemented extensions to tracematches and JavaMOP that generate these annotations automatically from runtime-monitor specifications. The annotations to the aspects preserve essential information about the combinations of events that may lead to a property violation and potentially also the order in which the events have to occur to violate the property.

Clara’s workflow: weave, analyze, re-weave

The user provides Clara with a set of annotated monitoring aspects and with a “base program”, the program under test. We implemented Clara as an extension to the abc compiler, an open research compiler for AspectJ. Clara first parses the annotated aspects and the supplied program. (Users can provide the program in source or in binary form, but aspects have to be supplied as source code.) Clara then first weaves the monitoring aspect(s) into the program under test. During this process, the abc compiler computes a so-called “weaving plan”. This plan contains information about which pieces of advice match where in the program. Clara then next invokes its static-analysis engine. The figure shows three analyses that Clara has built-in:

- the syntactic Quick Check,

- the flow-insensitive Orphan-shadows Analysis, and

- the flow-sensitive Nop-shadows analysis.

Generally, researchers can add any number of static analyses to Clara and execute them in any order. The three pre-defined analyses are just examples. These analyses analyze the program with increasing precision and at increasing compile-time cost.

The task of each static analysis is to identify “irrelevant shadows” in the program, i.e. instrumentation points for which the analysis can prove that this point does not need to be monitored at runtime because it can (1) not contribute to a property violation and (2) cannot prevent a property violation from occurring. Using a very simple API, an analysis can inform Clara that it identified a shadow as irrelevant. When this happens, Clara automatically marks this shadow as “don’t weave” in the weaving plan.

When all analyses have finished, then Clara uses abc to un-weave the program, restoring its original code, and then re-weave the program with the updated weaving plan. The resulting program will invoke the monitoring aspect only at those code locations that no static analysis marked as irrelevant. Usually, this program will run much faster than a fully instrumented program that updates the runtime monitor at any program point that the aspect’s pointcuts describe. In cases where the analyses in Clara could prove the program safe, i.e. marked all shadows as irrelevant, the resulting program is even free of instrumentation, i.e. it runs without additional runtime overhead.

Optional Collaborative Runtime Verification

In certain cases it may happen that the instrumented program shows a large runtime overhead despite all static analyses. This may happen if some piece of code that does indeed violate the stated property resides in a hot loop, or if the analyses are too imprecise to determine that the code in this loop is actually safe. When this happens, the programmer can opt to have a specially instrumented program generated. This program is suitable for collaborative runtime verification: the programmer can execute the program collaboratively on multiple machines, where each machine will only observe events generated by a subset of instrumentation points. In addition, the programmer can configure these programs such that they will monitor certain instrumentation points only from time to time. This approach is suitable when it comes to testing the program in the field, and when the program is shipped to a large set of customers. Each customer will execute a slightly different program, which monitors a slightly different set of program points. In result, this monitoring approach cannot give any guarantees: due to the partial instrumentation, property violations may be missed. Nevertheless, our approach allows programmers to execute a partially instrumented program that runs fast, with a bounded runtime overhead, but still gives the programmers some opportunity to find property violations in this program. In particular, when programmers enable every single probe in at least one user’s program copy, this yield the same coverage as enabling all probes in a single program copy.

There are no special requirements on an analysis or runtime monitor so that it can benefit from Collaborative Runtime Verification. Any compatible runtime monitor will do, as will any static typestate analysis that uses Clara’s API correctly.

Summary

To summarize, Clara consists of the following key components:

- a front-end that can parse AspectJ aspects that are annotated with Dependency State Machines,

- a front-end that can read Java programs from both bytecode and source code (provided by abc),

- an extensible static-analysis engine to perform typestate analysis, and

- a code generator for Collaborative Runtime Verification.

Clara provides the following key features:

- a simple syntax for denoting typestate properties that is both human-readable and machine-readable,

- an analysis engine for static typestate analysis that comes with three pre-defined example analyses,

- the engine can automatically transform a runtime monitor to a more efficient residual monitor based on the analysis results

- and a code generator for Collaborative Runtime Verification.

These components use the following key abstractions:

- A monitoring aspect is an AspectJ aspect that contains a set of dependent advice and is annotated with a Dependency State Machine.

- A dependent advice is an advice that carries the “dependent” keyword. Clara will use its static analyses to modify a dependent piece of advice so that it only executes at relevant shadows. Clara does not modify pieces of advice that are not markes as “dependent”.

- A Dependency State Machine is a state-machine representation of a (finite-state) typestate property. In Clara, programmers denote a Dependency State Machine in a special syntax.

- A shadow is a program point at which a (dependent) piece of advice may execute. Shadows can be compatible with each other or not. We say that two shadows are incompatible if they bind the same variable v to two distinct objects; otherwise they are compatible.

- A object representative is a static abstraction of a runtime object.